Hey,

When the code below is executed, the final step of masking does not work:

# Does not work!!

masked = (s1_s2_water).mask(exclusion_mask.resample_cube_spatial(s1_s2_water))

All previous steps work and looks good so it should not be a problem. I have tried multiple thing but none of them works.

-

both layers (

s1_s2_water, exclusion_mask )look fine, as you can see below -

resample_cube_spatialwas applied but no success -

resample_cube_spatialwas NOT applied but no success. -

masked = (s1_s2_water).mask(exclusion_mask*1.0)it does not work

What could be a problem? Could you have a look @jeroen.dries or @stefaan.lippens ?

The full code:

start_date = '2021-06-01'

spatial_extent = {'west': -74.06810760, 'east': -73.90597343, 'south': 4.689864510, 'north': 4.724080996, 'crs': 'epsg:4326'} #colombia

zone = "tropical"

start_date_dt_object = datetime.strptime(start_date, '%Y-%m-%d')

end_date = (start_date_dt_object + relativedelta(months = +1)).date() ## End date, 1 month later (1st Feb. 2021)

start_date_exclusion = (start_date_dt_object + relativedelta(months = -1)).date()

bands = ['B02', 'B03', 'B04', 'B08', 'CLP', 'SCL' , 'sunAzimuthAngles', 'sunZenithAngles']

LOOKUPTABLE = {

'tropical':

{

'S1': '1 / (1 + exp(- (-7.17 + (-0.48 * VV))))',

'S2': '1 / (1 + exp(- (0.845 + (2.14 * NDVI) + (13.5 * NDWI))))',

'S1_S2':'1 / (1 + exp(- (-2.64 + (-0.23 * VV) + (8.6 * NDWI))))'

},

'subtropical':

{

'S1': '1 / (1 + exp(- (-8.1 + (-0.13 * VV) + (-0.27 * VH))))',

'S2': '1 / (1 + exp(- (0.845 + (2.14 * NDVI) + (13.5 * NDWI))))',

'S1_S2':'1 / (1 + exp(- (-2.64 + (-0.23 * VV) + (8.6 * NDWI))))'

}

}

s2_cube = connection.load_collection(

'SENTINEL2_L2A_SENTINELHUB',

spatial_extent = spatial_extent,

temporal_extent = [start_date_exclusion, end_date],

bands = bands)

# Scene classification data, based on Sen2Cor

# scl == 3 Cloud Shadows

# scl == 8 Clouds medium probability

# scl == 9 Clouds high probability

# scl == 10 Cirrus

# scl == 11 Snow / Ice

scl = s2_cube.band("SCL")

mask_scl = (scl == 3) | (scl == 8) | (scl == 9) | (scl == 10) |(scl == 11)

# mask {1 = cloud, 0 = free cloud}

s2_cube = s2_cube.mask(mask_scl)

# CLP (cloud probabilities) based on S2cloudless (160 blocks and 10m resolution)

clp = s2_cube.band("CLP")

mask_clp = (clp / 255) > 0.3

# mask {1 = cloud, 0 = free cloud}

s2_cube = s2_cube.mask(mask_clp)

s2_count = s2_cube.filter_bands(bands = ["B08"])

s2_count = s2_count.reduce_dimension(reducer=lambda data: data.count(), dimension = "t")

s2_count = s2_count.rename_labels("bands",["count"])

s2_cube = append_index(s2_cube,"NDWI") ## index 8

s2_cube = append_index(s2_cube,"NDVI") ## index 9

def water_function(data):

NDWI = array_element(data, index = 8)

NDVI = array_element(data, index = 9)

water = eval(LOOKUPTABLE[zone]['S2'])

return water

s2_cube_water = s2_cube.reduce_dimension(reducer = water_function, dimension = "bands")

s2_cube_water = s2_cube_water.add_dimension("bands", "water_prob", type = "bands")

s2_cube_water_threshold = s2_cube_water.apply_dimension(dimension="bands", process=lambda x: if_(x > 0.75, x,0))

s2_cube_water_threshold = s2_cube_water_threshold.rename_labels("bands",["w_T75"])

s2_cube_water_sum = s2_cube_water_threshold.reduce_dimension(reducer = "sum", dimension = "t")

s2_cube_water_sum = s2_cube_water_sum.rename_labels("bands",["sum"])

s2_cube_swf = s2_cube_water_sum.resample_cube_spatial(s2_count) / s2_count

s2_cube_swf = s2_cube_swf.rename_labels("bands",["swf"])

## calculate the median s2 water probability for 1-month.

s2_median_water = s2_cube_water.filter_temporal([start_date, end_date]).median_time()

s2_cube_median = s2_cube.filter_temporal([start_date, end_date]).median_time()

## Get Sentinel-1 data for a 1 month window and convert to ARD data.

s1_cube = connection.load_collection(

'SENTINEL1_GRD',

spatial_extent = spatial_extent,

temporal_extent = [start_date, end_date],

bands = ['VH','VV'],

properties = {"polarization": lambda p: p == "DV"})

s1_cube = s1_cube.ard_normalized_radar_backscatter(elevation_model="COPERNICUS_30")

s1_cube = s1_cube.rename_labels("bands",["VH","VV","mask", "incidence_angle"])

s1_cube_mask = s1_cube.band("mask")

# radar shadow mask has value 2

s1_mask_RS= (s1_cube_mask == 2)

s1_cube = s1_cube.mask(s1_mask_RS)

def log_(x):

return 10*log (x, 10)

s1_median = s1_cube.median_time().apply(log_)

def s1_water_function(data):

VV = array_element(data, index = 1)

water = eval(LOOKUPTABLE[zone]['S1'])

return water

s1_median_water = s1_median.reduce_dimension(reducer = s1_water_function, dimension = "bands")

exclusion_mask = (s1_median_water.resample_cube_spatial(s2_cube_swf) > 0.5) & (s2_cube_swf < 0.33)

exclusion_mask = exclusion_mask * 1.0

s1_median_water_mask = s1_median_water.mask(exclusion_mask.resample_cube_spatial(s1_median_water))

s1_median_water_mask = s1_median_water_mask * 1.0

def s1_s2_water_function (dc):

VV = array_element(dc, index = 0)

NDWI = array_element(dc, index = 1)

water = eval(LOOKUPTABLE[zone]['S1_S2'])

return water

## Get S1 VV, and S2 NDWI, and apply s1_s2_water function

s1_s2_cube = s1_median.filter_bands(['VV']).resample_cube_spatial(s2_cube_median).merge_cubes(s2_cube_median.filter_bands(['NDWI'])) # works

s1_s2_water = s1_s2_cube.reduce_dimension(reducer = s1_s2_water_function, dimension = "bands")

# Does not work!!

masked = (s1_s2_water).mask(exclusion_mask.resample_cube_spatial(s1_s2_water))

# Downloading Data

s1_s2_masked_save = masked.save_result(format='netCDF') #GTiff #netCDF

my_job = s1_s2_masked_save.send_job(title="masked")

results = my_job.start_and_wait().get_results()

results.download_files('masked')

The s1_s2_water and exclusion_mask layers have the same spatial extent and one band.

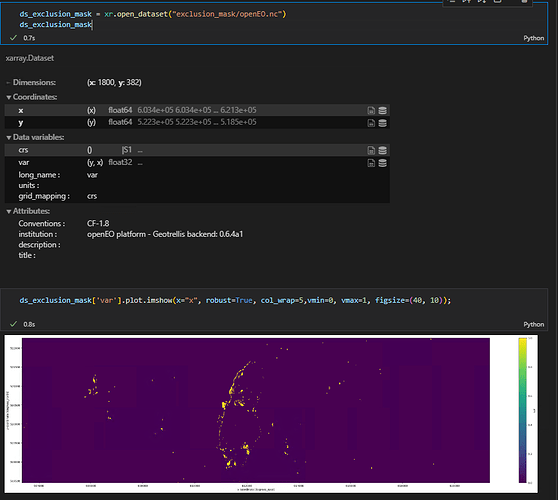

exclusion_mask:

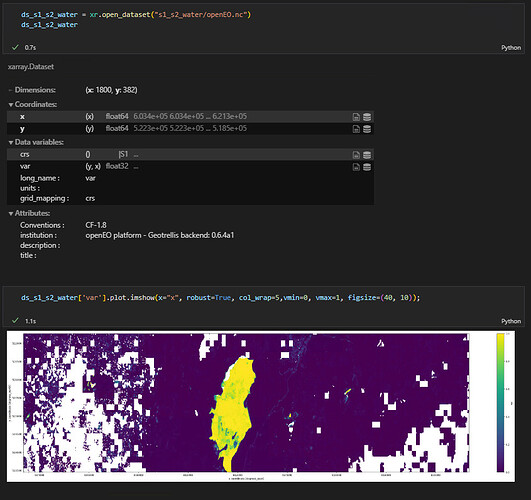

s1_s2_water:

The error message:

error processing batch job Traceback (most recent call last): File "batch_job.py", line 315, in main run_driver() File "batch_job.py", line 288, in run_driver run_job( File "/opt/venv/lib64/python3.8/site-packages/openeogeotrellis/utils.py", line 48, in memory_logging_wrapper return function(*args, **kwargs) File "batch_job.py", line 347, in run_job result = ProcessGraphDeserializer.evaluate(process_graph, env=env, do_dry_run=tracer) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 322, in evaluate return convert_node(result_node, env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 328, in convert_node return apply_process( File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in apply_process args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in <dictcomp> args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 333, in convert_node return convert_node(processGraph['node'], env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 328, in convert_node return apply_process( File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1389, in apply_process the_mask = convert_node(mask_node,env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 333, in convert_node return convert_node(processGraph['node'], env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 328, in convert_node return apply_process( File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in apply_process args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in <dictcomp> args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 333, in convert_node return convert_node(processGraph['node'], env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 328, in convert_node return apply_process( File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in apply_process args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in <dictcomp> args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 333, in convert_node return convert_node(processGraph['node'], env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 328, in convert_node return apply_process( File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in apply_process args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in <dictcomp> args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 333, in convert_node return convert_node(processGraph['node'], env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 328, in convert_node return apply_process( File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in apply_process args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in <dictcomp> args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 333, in convert_node return convert_node(processGraph['node'], env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 328, in convert_node return apply_process( File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in apply_process args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in <dictcomp> args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 333, in convert_node return convert_node(processGraph['node'], env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 328, in convert_node return apply_process( File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in apply_process args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in <dictcomp> args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 333, in convert_node return convert_node(processGraph['node'], env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 328, in convert_node return apply_process( File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in apply_process args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in <dictcomp> args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 333, in convert_node return convert_node(processGraph['node'], env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 328, in convert_node return apply_process( File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in apply_process args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in <dictcomp> args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 333, in convert_node return convert_node(processGraph['node'], env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 328, in convert_node return apply_process( File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in apply_process args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in <dictcomp> args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 333, in convert_node return convert_node(processGraph['node'], env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 328, in convert_node return apply_process( File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in apply_process args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in <dictcomp> args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 333, in convert_node return convert_node(processGraph['node'], env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 328, in convert_node return apply_process( File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in apply_process args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in <dictcomp> args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 333, in convert_node return convert_node(processGraph['node'], env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 328, in convert_node return apply_process( File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in apply_process args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in <dictcomp> args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 333, in convert_node return convert_node(processGraph['node'], env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 328, in convert_node return apply_process( File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in apply_process args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in <dictcomp> args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 333, in convert_node return convert_node(processGraph['node'], env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 328, in convert_node return apply_process( File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in apply_process args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in <dictcomp> args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 333, in convert_node return convert_node(processGraph['node'], env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 328, in convert_node return apply_process( File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in apply_process args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in <dictcomp> args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 333, in convert_node return convert_node(processGraph['node'], env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 328, in convert_node return apply_process( File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in apply_process args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in <dictcomp> args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 333, in convert_node return convert_node(processGraph['node'], env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 328, in convert_node return apply_process( File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in apply_process args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in <dictcomp> args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 333, in convert_node return convert_node(processGraph['node'], env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 328, in convert_node return apply_process( File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in apply_process args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in <dictcomp> args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 333, in convert_node return convert_node(processGraph['node'], env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 328, in convert_node return apply_process( File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1391, in apply_process args = {"data": convert_node(args["data"], env=env), "mask":the_mask } File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 333, in convert_node return convert_node(processGraph['node'], env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 328, in convert_node return apply_process( File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1389, in apply_process the_mask = convert_node(mask_node,env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 333, in convert_node return convert_node(processGraph['node'], env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 328, in convert_node return apply_process( File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in apply_process args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1394, in <dictcomp> args = {name: convert_node(expr, env=env) for (name, expr) in sorted(args.items())} File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 333, in convert_node return convert_node(processGraph['node'], env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 328, in convert_node return apply_process( File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 1489, in apply_process return process_function(args=args, env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo_driver/ProcessGraphDeserializer.py", line 493, in load_collection return env.backend_implementation.catalog.load_collection(collection_id, load_params=load_params, env=env) File "/opt/venv/lib64/python3.8/site-packages/openeo/util.py", line 363, in wrapper return f(*args, **kwargs) File "/opt/venv/lib64/python3.8/site-packages/openeogeotrellis/layercatalog.py", line 523, in load_collection pyramid = sentinel_hub_pyramid() File "/opt/venv/lib64/python3.8/site-packages/openeogeotrellis/layercatalog.py", line 453, in sentinel_hub_pyramid pyramid_factory.datacube_seq(projected_polygons_native_crs.polygons(), File "/opt/spark3_2_0/python/lib/py4j-0.10.9.2-src.zip/py4j/java_gateway.py", line 1309, in __call__ return_value = get_return_value( File "/opt/spark3_2_0/python/lib/py4j-0.10.9.2-src.zip/py4j/protocol.py", line 326, in get_return_value raise Py4JJavaError( py4j.protocol.Py4JJavaError: An error occurred while calling o1629.datacube_seq. : org.apache.spark.SparkException: Job aborted due to stage failure: Task 0 in stage 50.0 failed 4 times, most recent failure: Lost task 0.3 in stage 50.0 (TID 174) (epod052.vgt.vito.be executor 4): java.lang.IllegalArgumentException: Invalid band index 5, only 4 bands available. at org.openeo.geotrellis.OpenEOProcessScriptBuilder.$anonfun$arrayElementFunction$2(OpenEOProcessScriptBuilder.scala:1137) at org.openeo.geotrellis.OpenEOProcessScriptBuilder$.org$openeo$geotrellis$OpenEOProcessScriptBuilder$$evaluateToTiles(OpenEOProcessScriptBuilder.scala:200) at org.openeo.geotrellis.OpenEOProcessScriptBuilder.$anonfun$xyFunction$2(OpenEOProcessScriptBuilder.scala:506) at org.openeo.geotrellis.OpenEOProcessScriptBuilder$.org$openeo$geotrellis$OpenEOProcessScriptBuilder$$evaluateToTiles(OpenEOProcessScriptBuilder.scala:200) at org.openeo.geotrellis.OpenEOProcessScriptBuilder.$anonfun$xyFunction$2(OpenEOProcessScriptBuilder.scala:506) at org.openeo.geotrellis.OpenEOProcessScriptBuilder$.org$openeo$geotrellis$OpenEOProcessScriptBuilder$$evaluateToTiles(OpenEOProcessScriptBuilder.scala:200) at org.openeo.geotrellis.OpenEOProcessScriptBuilder.$anonfun$xyFunction$2(OpenEOProcessScriptBuilder.scala:506) at org.openeo.geotrellis.OpenEOProcessScriptBuilder$.org$openeo$geotrellis$OpenEOProcessScriptBuilder$$evaluateToTiles(OpenEOProcessScriptBuilder.scala:200) at org.openeo.geotrellis.OpenEOProcessScriptBuilder.$anonfun$xyFunction$2(OpenEOProcessScriptBuilder.scala:506) at org.openeo.geotrellis.OpenEOProcessScriptBuilder$.org$openeo$geotrellis$OpenEOProcessScriptBuilder$$evaluateToTiles(OpenEOProcessScriptBuilder.scala:200) at org.openeo.geotrellis.OpenEOProcessScriptBuilder.$anonfun$xyFunction$2(OpenEOProcessScriptBuilder.scala:506) at org.openeo.geotrellis.OpenEOProcessScriptBuilder$.$anonfun$wrapProcessWithDefaultContext$1(OpenEOProcessScriptBuilder.scala:46) at org.openeo.geotrellis.OpenEOProcesses.$anonfun$mapBandsGeneric$2(OpenEOProcesses.scala:398) at org.apache.spark.rdd.PairRDDFunctions.$anonfun$mapValues$3(PairRDDFunctions.scala:751) at scala.collection.parallel.IterableSplitter$Mapped.next(RemainsIterator.scala:475) at org.apache.spark.scheduler.Task.run(Task.scala:131) at org.apache.spark.executor.Executor$TaskRunner.$anonfun$run$3(Executor.scala:506) at org.apache.spark.util.Utils$.tryWithSafeFinally(Utils.scala:1462) at org.apache.spark.executor.Executor$TaskRunner.run(Executor.scala:509) at java.base/java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1128) at java.base/java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:628) at java.base/java.lang.Thread.run(Thread.java:829) Driver stacktrace: at org.apache.spark.scheduler.DAGScheduler.failJobAndIndependentStages(DAGScheduler.scala:2403) at org.apache.spark.scheduler.DAGScheduler.$anonfun$abortStage$2(DAGScheduler.scala:2352) at org.apache.spark.scheduler.DAGScheduler.$anonfun$abortStage$2$adapted(DAGScheduler.scala:2351) at scala.collection.mutable.ResizableArray.foreach(ResizableArray.scala:62) at scala.collection.mutable.ResizableArray.foreach$(ResizableArray.scala:55) at scala.collection.mutable.ArrayBuffer.foreach(ArrayBuffer.scala:49) at org.apache.spark.scheduler.DAGScheduler.abortStage(DAGScheduler.scala:2351) at org.apache.spark.scheduler.DAGScheduler.$anonfun$handleTaskSetFailed$1(DAGScheduler.scala:1109) at org.apache.spark.scheduler.DAGScheduler.$anonfun$handleTaskSetFailed$1$adapted(DAGScheduler.scala:1109) at scala.Option.foreach(Option.scala:407) at org.apache.spark.scheduler.DAGScheduler.handleTaskSetFailed(DAGScheduler.scala:1109) at org.apache.spark.scheduler.DAGSchedulerEventProcessLoop.doOnReceive(DAGScheduler.scala:2591) at org.apache.spark.scheduler.DAGSchedulerEventProcessLoop.onReceive(DAGScheduler.scala:2533) at org.apache.spark.scheduler.DAGSchedulerEventProcessLoop.onReceive(DAGScheduler.scala:2522) at org.apache.spark.util.EventLoop$$anon$1.run(EventLoop.scala:49) at org.apache.spark.scheduler.DAGScheduler.runJob(DAGScheduler.scala:898) at org.apache.spark.SparkContext.runJob(SparkContext.scala:2214) at org.apache.spark.SparkContext.runJob(SparkContext.scala:2235) at org.apache.spark.SparkContext.runJob(SparkContext.scala:2254) at org.apache.spark.SparkContext.runJob(SparkContext.scala:2279) at org.apache.spark.rdd.RDD.count(RDD.scala:1253) at org.openeo.geotrellissentinelhub.PyramidFactory.datacube_seq(PyramidFactory.scala:341) at java.base/jdk.internal.reflect.NativeMethodAccessorImpl.invoke0(Native Method) at java.base/jdk.internal.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62) at java.base/jdk.internal.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43) at java.base/java.lang.reflect.Method.invoke(Method.java:566) at py4j.reflection.MethodInvoker.invoke(MethodInvoker.java:244) at py4j.reflection.ReflectionEngine.invoke(ReflectionEngine.java:357) at py4j.Gateway.invoke(Gateway.java:282) at py4j.commands.AbstractCommand.invokeMethod(AbstractCommand.java:132) at py4j.commands.CallCommand.execute(CallCommand.java:79) at py4j.ClientServerConnection.waitForCommands(ClientServerConnection.java:182) at py4j.ClientServerConnection.run(ClientServerConnection.java:106) at java.base/java.lang.Thread.run(Thread.java:829) Caused by: java.lang.IllegalArgumentException: Invalid band index 5, only 4 bands available. at org.openeo.geotrellis.OpenEOProcessScriptBuilder.$anonfun$arrayElementFunction$2(OpenEOProcessScriptBuilder.scala:1137) at org.openeo.geotrellis.OpenEOProcessScriptBuilder$.org$openeo$geotrellis$OpenEOProcessScriptBuilder$$evaluateToTiles(OpenEOProcessScriptBuilder.scala:200) at org.openeo.geotrellis.OpenEOProcessScriptBuilder.$anonfun$xyFunction$2(OpenEOProcessScriptBuilder.scala:506) at org.openeo.geotrellis.OpenEOProcessScriptBuilder$.org$openeo$geotrellis$OpenEOProcessScriptBuilder$$evaluateToTiles(OpenEOProcessScriptBuilder.scala:200) at org.openeo.geotrellis.OpenEOProcessScriptBuilder.$anonfun$xyFunction$2(OpenEOProcessScriptBuilder.scala:506) at org.openeo.geotrellis.OpenEOProcessScriptBuilder$.org$openeo$geotrellis$OpenEOProcessScriptBuilder$$evaluateToTiles(OpenEOProcessScriptBuilder.scala:200) at org.openeo.geotrellis.OpenEOProcessScriptBuilder.$anonfun$xyFunction$2(OpenEOProcessScriptBuilder.scala:506) at org.openeo.geotrellis.OpenEOProcessScriptBuilder$.org$openeo$geotrellis$OpenEOProcessScriptBuilder$$evaluateToTiles(OpenEOProcessScriptBuilder.scala:200) at org.openeo.geotrellis.OpenEOProcessScriptBuilder.$anonfun$xyFunction$2(OpenEOProcessScriptBuilder.scala:506) at org.openeo.geotrellis.OpenEOProcessScriptBuilder$.org$openeo$geotrellis$OpenEOProcessScriptBuilder$$evaluateToTiles(OpenEOProcessScriptBuilder.scala:200) at org.openeo.geotrellis.OpenEOProcessScriptBuilder.$anonfun$xyFunction$2(OpenEOProcessScriptBuilder.scala:506) at org.openeo.geotrellis.OpenEOProcessScriptBuilder$.$anonfun$wrapProcessWithDefaultContext$1(OpenEOProcessScriptBuilder.scala:46) at org.openeo.geotrellis.OpenEOProcesses.$anonfun$mapBandsGeneric$2(OpenEOProcesses.scala:398) at org.apache.spark.rdd.PairRDDFunctions.$anonfun$mapValues$3(PairRDDFunctions.scala:751) at org.apache.spark.scheduler.Task.run(Task.scala:131) at org.apache.spark.executor.Executor$TaskRunner.$anonfun$run$3(Executor.scala:506) at org.apache.spark.util.Utils$.tryWithSafeFinally(Utils.scala:1462) at org.apache.spark.executor.Executor$TaskRunner.run(Executor.scala:509) at java.base/java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1128) at java.base/java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:628) ... 1 more

’